Your AI Study Guide

A structured learning agenda for anyone building AI fluency.


The consensus across the major research firms is that executives need at least 20 focused hours of AI learning before they can participate meaningfully in strategic AI discussions. That is a real commitment, and most people have not had the time. This guide is designed to make those hours count. The question it answers is simple: What do I actually need to know, and in what order?

You do not need to be technical. Many readers are executives navigating AI decisions, others are operators trying to understand what is changing and why, and some are founders building from scratch. Whatever your starting point, this guide meets you there.

AI is a vast and intricate universe, and each topic resembles its own galaxy. I spent a long time wandering through it, reading papers, taking courses, following dead ends, refining what mattered and discarding what did not. After two years of intensive study, consulting engagements, and the research that produced The Intelligence Organization, I have done that work so you do not have to. This guide is the sequence I wish I had started with.

This is a learning agenda, not a course. Each section gives you context, curated resources, and guidance on where to go deeper. The actual learning happens when you follow the links, read the books, take the courses, and use the tools. I have used, read, or evaluated every resource listed here. They are not exhaustive. AI is a field where curiosity rewards exploration. Follow the path, then follow your curiosity.

This is not a "how to use AI in your role" guide. There is plenty of that. This is for people who want to understand the entire sphere: the technology, the economics, the organizational design, the governance, the ethics, the infrastructure. Where teaching content appears, it is there to orient you. Give every section at least a pass, even the ones outside your immediate need.

The topics are organized in a learning progression. Each level builds on the one before it: orienting yourself to the landscape, applying tools to real work, analyzing how others have implemented AI, evaluating strategic decisions, then creating new systems and workflows. Start where you feel comfortable, but do not stay there.

Use the Contents tab (right edge) to track your progress. Mark sections complete as you go. Expand the notes area in any section to capture your own thinking. Your progress and notes are saved locally in your browser — nothing leaves your device.

Short on time? Complete sections 01 (Foundations) and 03 (Productivity) first. That covers 6–8 hours for minimum viable fluency. Then use your Learning Track below to decide what comes next.

Find your role. Follow the track top to bottom. The numbered list is your sequence — start at 1, work down.

How deep each role should go in each section. High = spend real time here. Med = working familiarity. Low = skim or skip.

Columns are grouped as Enterprise & Mid-Market (CEO / Board, VP / Func., Manager) and Small Business & Independent (Small Biz, Solo / Consult).

| Section | CEO / Board | VP / Func. | Manager | Small Biz | Solo / Consult |
|---|---|---|---|---|---|
| Foundations | High | High | High | High | High |
| Data Fundamentals | Med | Med | Med | Med | Low |
| Productivity | Med | High | High | High | High |
| Skill Development | Low | Med | High | Med | High |
| Applied Knowledge | Med | High | Med | High | Med |
| AI for Business | High | High | Med | High | Med |
| Failure Modes | High | High | Med | High | Med |
| Tool Selection | Med | High | High | High | High |
| Value Prioritization | High | High | Med | High | Med |
| Governance & Security | High | High | Med | Med | Low |
| Ethics | High | Med | Med | Med | Med |
| Workflows | Med | High | High | High | High |
| Infrastructure | Low | Med | Low | Low | Low |
| Staying Current | Med | Med | High | High | High |
| AI Agents | Med | High | High | High | High |
| Skills & Automation | Low | Med | High | High | High |

High = core to your role. Med = useful context. Low = reference only.

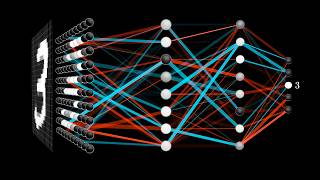

Before diving into the learning journey, orient yourself to the architecture underneath every AI product. You do not need to build at every layer. You need to know they exist.

Interactive Visualizers Inside Each Layer

Each layer below links to a simulation you can explore. Look for "See how this works ↗" — these open interactive walkthroughs that show what actually happens at each level of the stack.

Click any topic to expand. Work through them in order for the strongest foundation, or use the role playlists to prioritize.

After this section, you can follow any AI conversation at the conceptual level and identify which type of AI applies to a given problem.

Your starting point. Learn the core concepts and historical arc of AI: the distinctions between Deep Learning, Machine Learning, and Large Language Models, what Generative AI is, and what multi-modal systems mean in practice.

For this phase, use AI itself as your tutor. Ask Claude, ChatGPT, or Gemini your questions directly. The goal is conceptual fluency, not memorization.

After this section, you will understand why data quality is the #1 reason AI initiatives fail and what to look for in your own organization.

Data is AI's fuel. Understanding how AI consumes and processes data, alongside concepts of data maturity, quality, security, and governance, clarifies both what AI can do and where it breaks.

Use AI for your actual daily work and model AI-fluent behavior for your team.

Before an organization can transform, its people need fluency. This means using AI tools for your actual work. The key is daily use on real tasks, not practice exercises.

Do not wait until you have finished the foundations. Draft a real memo with Claude. Analyze real data with ChatGPT. The difference between understanding AI conceptually and being able to use it comes from working with it on real tasks, repeatedly.

Prompt engineering — structuring inputs to get better outputs — was the entry point for most professionals learning AI. It still matters, but the field is moving past it. As models improve, the bottleneck shifts from how you phrase a single question to what information the model has access to when it answers. The industry is calling this shift context engineering: designing the full set of instructions, documents, tools, and conversation history that a model sees at each step. If prompt engineering is writing a good question, context engineering is building the room the conversation happens in.
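To make the contrast concrete, here is a minimal sketch of what "the full set of instructions, documents, tools, and conversation history" can look like when assembled for a single turn. The `Context` class, its field names, and the text layout are illustrative assumptions, not how any particular product formats its input, and the document text is a placeholder.

```python
from dataclasses import dataclass, field


@dataclass
class Context:
    """Everything the model sees on one turn (all names here are illustrative)."""
    instructions: str
    documents: list[str] = field(default_factory=list)
    tools: list[str] = field(default_factory=list)
    history: list[tuple[str, str]] = field(default_factory=list)  # (speaker, text)

    def render(self, question: str) -> str:
        """Flatten the context into one text payload that ends with the question."""
        parts = [f"INSTRUCTIONS:\n{self.instructions}"]
        for i, doc in enumerate(self.documents, start=1):
            parts.append(f"DOCUMENT {i}:\n{doc}")
        if self.tools:
            parts.append("TOOLS:\n" + "\n".join(f"- {t}" for t in self.tools))
        for speaker, text in self.history:
            parts.append(f"{speaker.upper()}: {text}")
        parts.append(f"USER: {question}")
        return "\n\n".join(parts)


question = "Summarize our Q3 churn drivers."

# Prompt engineering alone: only the question and a short instruction are shaped.
bare = Context(instructions="Answer briefly.").render(question)

# Context engineering: the same question, but the model has material to work from.
rich = Context(
    instructions="You are advising a VP of Customer Success. Cite the document you used.",
    documents=["(placeholder) Paste the text of your Q3 churn report here."],
    tools=["lookup_account(account_id)  # hypothetical tool name"],
    history=[("user", "We are reviewing retention."), ("assistant", "Understood.")],
).render(question)

print(rich)
```

The question is identical in both cases. What changes is the room around it, which is the part context engineering designs.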

This section covers both. Start with prompt engineering fundamentals if you are new to AI tools, then move toward context engineering and role-specific skill building as your fluency grows.

The best way to learn might be simpler than any curriculum: get comfortable prompting and use your tool of choice as a portal into the world. Ask it to explain what you are reading. Ask it to compare two approaches. Ask it what you should learn next. The tool itself becomes the tutor — but only if you use it daily on real questions.

Study what has been done before generating your own ideas. I maintain a research repository you are welcome to use. Google NotebookLM can convert any paper into audio for passive learning.

Research repositories worth monitoring

The core insight from my research: AI transformation is an organizational design challenge, not a technology challenge. Most organizations that fail with AI fail for structural reasons.

These four bands change how you prioritize the rest of this guide. Technology, people, operations, and governance must advance together. Each section from here forward maps to one or more bands.

Fitting technology to what your organization can absorb. See Section 08.

The binding constraint for all other bands. See Section 12.

Embedding AI into connective tissue. See Section 12.

The immune system. See Section 10.

Studying what goes wrong is as valuable as studying what goes right.

Dozens of disconnected pilots, no portfolio governance, no compounding learning. The cure: portfolio prioritization.

AI deployed faster than the organization can absorb. The cure: pace deployment to your actual ability to absorb change.

Employees adopting tools without governance. The cure: clear boundaries + fast lanes. See Section 10.

Catalogs failure patterns, provides diagnostic frameworks, introduces the Intelligence Organization Method and Starkey Model.

Learn more at rbdco.ai →

The AI tool landscape expands faster than any individual can track. Audit what you already have before buying new.

The Starkey Model maps use cases against value potential and implementation feasibility.

High value, high feasibility. Execute immediately.

High value, lower feasibility. Build capability first.

Lower value, high feasibility. Don't let these consume resources.

Revisit when conditions change.
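The four outcomes above can be sketched as a simple two-axis sort. This is an illustration of the idea only, not the Starkey Model itself: the 0-to-1 scoring scale, the 0.5 threshold, and the sample use cases are all assumptions, and the fourth outcome is assumed to be the lower-value, lower-feasibility case.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    value: float        # 0.0 to 1.0, scored by you (hypothetical scale)
    feasibility: float  # 0.0 to 1.0, scored by you (hypothetical scale)


def recommend(value: float, feasibility: float, threshold: float = 0.5) -> str:
    """Map a (value, feasibility) pair to one of the four actions in the text."""
    high_value = value >= threshold
    high_feasibility = feasibility >= threshold
    if high_value and high_feasibility:
        return "Execute immediately"
    if high_value:
        return "Build capability first"
    if high_feasibility:
        return "Don't let these consume resources"
    return "Revisit when conditions change"


# Hypothetical portfolio; the names and scores are made up for illustration.
portfolio = [
    UseCase("Contract summarization", 0.8, 0.9),
    UseCase("Autonomous pricing", 0.9, 0.3),
    UseCase("Meeting-notes cleanup", 0.3, 0.8),
    UseCase("Custom model training", 0.2, 0.2),
]

# List the highest-value items first so the portfolio reads as a priority order.
for uc in sorted(portfolio, key=lambda u: (u.value, u.feasibility), reverse=True):
    print(f"{uc.name}: {recommend(uc.value, uc.feasibility)}")
```

In practice the scoring conversation matters more than the threshold. The code only makes the sorting rule explicit.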

EU AI Act in effect. US policy shifting quarterly. Organizations that build adaptive governance now will not need to retrofit.

Six governance nodes from The Intelligence Organization. Click to expand:

Competence-based authority, not hierarchical sign-off.

Intent-based guardrails, not prescriptive rules.

Continuous monitoring, red-teaming, provenance tracking.

Real-time feeds, not quarterly audits.

Sociocratic consent, not mandated compliance.

Every person deploying or using AI should understand its ethical challenges and follow policy developments.

Not everyone adopts AI the same way. Recognizing adoption personas changes how you approach change management.

Already experimenting. Channel productively. Equip, don't constrain.

Curious but cautious. Pair with Pathfinders for peer mentoring.

Want to use AI but policy prevents it. Clarify boundaries. Quick win.

Demand proof. Only converted by outcomes they care about.

Understanding supply-side dynamics helps you interpret announcements and anticipate shifts in what becomes possible and affordable.

After this section, you will know what an agent actually is, when to use one, and why they introduce a new class of governance decisions.

Agents represent the shift from AI-as-tool to AI-as-collaborator. A chatbot responds to a single prompt. An agent reads files, calls APIs, executes code, handles errors, and chains multiple steps to complete a goal. Claude Code is an agent. So are the systems behind automated customer service, code review pipelines, and research workflows.

The distinction matters for leaders because agents introduce a new class of decisions: what should AI be allowed to do autonomously, what requires human approval, and how do you govern systems that take action? These questions map directly to Governance (Section 10) and the Adaptive Governance framework in The Intelligence Organization.
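A rough sketch of those three questions as code: each action an agent proposes is checked against a policy before it runs. The policy table, the action names, and the stubbed tools are hypothetical, and the plan is scripted by hand rather than produced by a model, so this shows only the governance gate, not a real agent.

```python
from typing import Callable

# Hypothetical policy: what may run autonomously and what needs human sign-off.
# Anything not listed is blocked by default.
POLICY = {
    "read_file": "autonomous",
    "call_api": "autonomous",
    "send_email": "needs_approval",
}


def run_agent(
    plan: list[tuple[str, str]],
    tools: dict[str, Callable[[str], str]],
    approve: Callable[[str, str], bool],
) -> list[str]:
    """Run each (action, argument) step in order, enforcing the policy."""
    log = []
    for action, arg in plan:
        rule = POLICY.get(action, "blocked")
        if rule == "blocked":
            log.append(f"blocked: {action}")
            continue
        if rule == "needs_approval" and not approve(action, arg):
            log.append(f"denied: {action}")
            continue
        try:
            result = tools[action](arg)
            log.append(f"ok: {action} -> {result}")
        except Exception as exc:  # an agent must handle failures and keep going
            log.append(f"error: {action} ({exc})")
    return log


# Stub tools so the sketch runs without touching real files, APIs, or inboxes.
tools = {
    "read_file": lambda path: f"<contents of {path}>",
    "call_api": lambda url: f"<response from {url}>",
    "send_email": lambda to: f"sent to {to}",
}

plan = [
    ("read_file", "notes.md"),
    ("send_email", "team@example.com"),
    ("delete_records", "crm"),
]

# A reviewer who declines everything: the email is denied, the delete is blocked.
for line in run_agent(plan, tools, approve=lambda action, arg: False):
    print(line)
```

Defaulting unknown actions to blocked is a design choice: the list of things an agent may do stays explicit, and new capabilities have to be added on purpose.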

After this section, you will know how to build reusable AI skills, connect Claude to your existing tools, and set up workflows that run on their own.

Section 15 covered what agents are. This section covers how to make them work for you repeatedly. The real leverage comes from building things you configure once and use indefinitely.

Three layers, each building on the last:

AI moves quarterly. Below are the sources I rely on, organized by cadence.

Claude Code is Anthropic's command-line interface for Claude. It runs in your terminal, reads and writes files on your machine, and maintains context across sessions. I use it daily to build research, manage projects, and run analysis with my full knowledge base loaded.

This section covers the tools and techniques that compound your learning over time. Each one builds on the last.

Claude Code is Anthropic's agentic coding tool. It operates in your terminal (Mac, Linux, or WSL on Windows). When you open it in a project folder, it reads the files around it, and you can point it at any file or folder on your machine, or at a URL.

Key capabilities:

A CLAUDE.md file in any directory auto-loads as context every session. Your instructions, preferences, and knowledge persist without re-explaining.

A few concepts that appear throughout this guide and in any AI workflow. You need a terminal and basic comfort with command-line navigation (cd, ls, mkdir). If that sentence is unfamiliar, start with Codecademy's free command line course (2 hours). You also need:

Installation: npm install -g @anthropic-ai/claude-code then run claude in any directory. Full setup: Quickstart guide

Instead of starting every AI conversation from scratch, build a persistent knowledge base that Claude loads automatically. This is the single most powerful learning technique I have discovered. Structure matters more than volume — a well-organized knowledge base of 20 files will outperform 200 unstructured documents.

Start by downloading this study guide and saving it to your machine. Then create a dedicated directory around it. This becomes your AI workspace:

~/ai-brain/CLAUDE.md: Auto-loaded context. Contains who you are, what you are working on, and how Claude should behave in this directory. This is the file that makes Claude feel like it "knows" you.

~/ai-brain/ai-studyguide-2026.html: This guide. Downloaded, local, always available. Claude can reference it, search it, and help you navigate it.

~/ai-brain/APPLY.md: Your action log. Every time something in this guide sparks an idea (a workflow you want to build, a leadership application, a concept you want to deepen, a tool you want to try), write it here. Tag each entry by source section and date. This is not a notebook. It is a queryable backlog of what you want to do with what you are learning. Claude reads it every session and can help you prioritize, connect ideas across entries, and execute. Over time, this file becomes the bridge between learning and doing.

~/ai-brain/learning/: Notes from courses, books, and articles. One file per major topic. Use tables and tagged lists, not paragraphs; structured data is more useful to Claude than prose.

~/ai-brain/research/: Summaries of papers and reports. A well-structured 5KB file beats a 50KB document dump.

~/ai-brain/weekly/: Weekly AI briefings (automated or manual). Date-stamped. Over months, this folder becomes a searchable timeline.

~/ai-brain/projects/: Active work contexts. One subfolder per project, each with its own CLAUDE.md.

Add a MANIFEST.md at the root that indexes everything: what exists, what questions each file answers, when it was last updated. Claude reads this first to navigate your knowledge base.
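If you would rather script the layout than create it by hand, the following is a minimal sketch. The folder and file names come from the list above. The starter text inside each file and the `scaffold` function name are assumptions you should replace with your own. The guide itself is not created here, since you download it separately.

```python
from pathlib import Path

FOLDERS = ["learning", "research", "weekly", "projects"]

# Starter contents are placeholders; rewrite them in your own words.
FILES = {
    "CLAUDE.md": "# Who I am\n\n# What I am working on\n\n# How Claude should behave here\n",
    "APPLY.md": "# APPLY\n\n## Done\n",
    "MANIFEST.md": (
        "# Manifest\n\n"
        "| File | Questions it answers | Last updated |\n"
        "|---|---|---|\n"
    ),
}


def scaffold(root: Path) -> list[Path]:
    """Create the workspace under `root` and return the files that were newly written."""
    root.mkdir(parents=True, exist_ok=True)
    for name in FOLDERS:
        (root / name).mkdir(exist_ok=True)
    created = []
    for name, starter in FILES.items():
        path = root / name
        if not path.exists():  # never overwrite notes you have already written
            path.write_text(starter, encoding="utf-8")
            created.append(path)
    return created


# To build the workspace in your home directory, uncomment the next line:
# scaffold(Path.home() / "ai-brain")
```

Running it a second time is safe: existing files are left alone and only missing pieces are added.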

The APPLY.md is the file most people skip and later wish they had started sooner. When you ask Claude "what did I want to follow up on from the governance section?" — it has the answer. When you want to review everything you have flagged for your team — it is all in one place. Move completed items to a Done section at the bottom. Do not delete them. That history is your record of growth.
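One possible shape for that file, sketched in code: open items are tagged with their source section and date, and finished items move to a Done section at the bottom instead of being deleted. The checkbox format, the "## Done" heading, and both function names are assumptions, not a required layout. The functions work on text so you can try them without touching a real file.

```python
from datetime import date

DONE_HEADING = "## Done"  # assumed to be the last section in the file


def add_entry(text: str, idea: str, section: str, when: date) -> str:
    """Add an open item, tagged by source section and date, above the Done section."""
    entry = f"- [ ] {idea} (section: {section}, added: {when.isoformat()})"
    if DONE_HEADING not in text:
        text = text.rstrip("\n") + f"\n\n{DONE_HEADING}\n"
    backlog, done = text.split(DONE_HEADING, 1)
    backlog = backlog.rstrip("\n") + "\n" + entry + "\n\n"
    return backlog + DONE_HEADING + done


def mark_done(text: str, idea: str) -> str:
    """Move the first open item mentioning `idea` to the end of Done. Nothing is deleted."""
    lines = text.splitlines()
    for i, line in enumerate(lines):
        if line.startswith("- [ ]") and idea in line:
            finished = lines.pop(i).replace("- [ ]", "- [x]", 1)
            lines.append(finished)  # Done is the last section, so append to the end
            return "\n".join(lines) + "\n"
    return text  # no matching open item; leave the file unchanged


log = add_entry("# APPLY\n", "Draft an AI use policy", "10 Governance", date(2026, 1, 5))
log = add_entry(log, "Try NotebookLM on board papers", "05 Applied Knowledge", date(2026, 1, 6))
log = mark_done(log, "Draft an AI use policy")
print(log)
```

Because every entry carries its section and date in a consistent form, a question like "what did I flag from the governance section?" becomes a simple search.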

Start here, in this order:

Create ~/ai-brain/ and add a CLAUDE.md with your name, role, and what you are learning.

Run claude in that directory and ask it to help you organize your first set of notes from this study guide.

Documentation: Overview · Skills · Scheduled tasks · Prompt engineering

In nearly every conversation I have, I am asked the same question. How do you keep up?

These are my answers. Each takes 15–30 minutes and produces outsized results relative to the effort.

Use Claude Code's scheduled tasks to generate a recurring weekly briefing. Structure: breakthroughs, business implications, policy changes, notable launches. Save to ~/ai-brain/weekly/. Load into NotebookLM for audio overviews.

It takes about fifteen minutes to configure, and from then on it replaces hours of manual scanning each week. Over months, your weekly/ folder becomes a searchable timeline of AI developments filtered through your priorities.
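The file-naming side of this can be sketched in a few lines. It does not show how to configure the scheduled task itself (see the Claude Code documentation for that). It only illustrates why date-stamped filenames turn the folder into a timeline. The filename pattern and function names are assumptions; the four headings come from the structure described above.

```python
from datetime import date
from pathlib import Path

SECTIONS = ["Breakthroughs", "Business implications", "Policy changes", "Notable launches"]


def briefing_path(weekly_dir: Path, day: date) -> Path:
    """ISO dates (YYYY-MM-DD) sort alphabetically in chronological order."""
    return weekly_dir / f"{day.isoformat()}-briefing.md"


def briefing_template(day: date) -> str:
    """An empty briefing with the four headings, ready to be filled in."""
    lines = [f"# Weekly AI briefing: {day.isoformat()}", ""]
    for section in SECTIONS:
        lines += [f"## {section}", "", "- ", ""]
    return "\n".join(lines)


def timeline(weekly_dir: Path) -> list[str]:
    """All briefings in the folder, oldest first."""
    return sorted(p.name for p in weekly_dir.glob("*-briefing.md"))


print(briefing_template(date.today()))
```

Whether the briefing is written by a scheduled task or by hand, keeping the same headings every week is what makes month-over-month comparison possible.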

When you find a valuable resource, add a structured note to your knowledge graph — one sentence on why it matters, tagged by topic. Feed papers and reports into Google NotebookLM to query across your collection and generate audio overviews. Over time, the pattern recognition that comes from organized curation is what produces strategic insight — not the individual articles themselves.

Create a folder called ~/ai-brain/. Inside it, create a file called CLAUDE.md. Write three things: who you are, what you are learning, and what kind of help you want from Claude. Open Claude Code in that directory. Every future session starts with context instead of a blank page. This takes about five minutes to set up and it changes how every subsequent conversation with Claude works.

Block 90 minutes per week specifically for AI learning. Protect this time the way you would protect a meeting with your CEO. Use it to work through one section of this guide, explore one new tool, or read one substantive report. Twenty minutes a day, consistently, will get you further than a quarterly deep-dive.

RBD. is an enterprise AI capability advisory firm. We redesign how organizations work so AI delivers compounding value across governance, operating models, people capability, and technology architecture.

Founder Megan C. Starkey brings over 15 years of experience leading enterprise transformations across revenue-driving functions and organizational design. She is the author of The Intelligence Organization, which introduces the Intelligence Organization Method and the Starkey Model. Megan is a partner in the Netrii advisory network.

Our Intelligence Center publishes research briefs, strategic insights, and proprietary frameworks.

Community: Women Build the Future · MN Women in AI

This guide builds your fluency. The offerings below take it further.

Guided practice on your real work, at your pace.

Published research and proprietary frameworks for leaders making AI decisions.

Proprietary framework mapping use cases against value potential and implementation feasibility. Produces a prioritized portfolio. Self-serve or consulting-led.

Explore the model →

Founder-led engagements: capability assessment, AI operating model design, governance architecture, workforce development.

See the method →

Take these with you. Share them with your team.

Complete visual map of the Intelligence Organization Method: four bands, three waves, seven swimlanes.

Download PDF

Questions, feedback, what resonated, what's missing: I read and listen to everything.

If you want to talk through what you are learning or where to focus next, I am available.

Schedule a Conversation · Visit rbdco.ai

Agent — An AI system that takes actions autonomously: reading files, calling APIs, executing code. Claude Code is an agent.

API — Application Programming Interface. How software systems communicate. AI APIs let developers integrate models into applications.

AGI — Artificial General Intelligence. Hypothetical AI that can perform any intellectual task a human can. Does not exist today.

Attention Mechanism — The architectural innovation behind transformers. Allows models to weigh relevance of different input parts when generating each word.

Benchmark — Standardized test for evaluating model performance. MMLU (knowledge), HumanEval (coding), HellaSwag (reasoning).

Chain-of-Thought — Prompting technique: ask the model to show reasoning step by step. Improves accuracy on complex tasks.

CLAUDE.md — Markdown file auto-loaded by Claude Code in a directory. Stores persistent context, instructions, preferences.

Context Window — How much text a model can process in one conversation. Claude: 200K tokens (~500 pages).

Copilot — AI assistant embedded in a software tool. GitHub Copilot, Microsoft 365 Copilot, Salesforce Einstein. A UX pattern, not a technology.

Deep Learning — Machine learning using neural networks with many layers. The approach behind all modern LLMs and image generators.

Diffusion Model — Architecture behind Midjourney, DALL-E, Stable Diffusion. Generates images by reversing a noise-addition process.

Embeddings — Numerical representations of text that capture meaning. Used in search, recommendations, and RAG systems.

Fine-tuning — Training a foundation model further on specialized data. Most orgs use prompting and RAG instead.

Foundation Model — Large general-purpose model trained on broad data, then adapted for specific tasks. Claude, GPT, Gemini, Llama.

Function Calling — API feature letting models request execution of predefined functions rather than just generating text.

GPU — Graphics Processing Unit. The hardware that trains and runs AI models. NVIDIA dominates. Availability and cost shape viability.

Guardrails — Constraints on AI systems to prevent harmful or off-topic outputs. System-level, organizational, or prompt-embedded.

Hallucination — When a model generates confident-sounding information that is factually wrong. Mitigated by RAG and human oversight.

Inference — Running a trained model to generate outputs. Distinct from training. Per-token pricing determines deployment economics.

Knowledge Graph — Structured representation of entities and relationships. In AI workflows, organized files giving AI persistent, queryable context.

Latency — Time between request and response. Smaller models and edge deployment reduce it.

LLM — Large Language Model. The class of model behind Claude, GPT, Gemini, Llama. Trained on text to predict and generate language.

Markdown — Plain text formatting syntax (.md files). Models read and write it natively.

MCP — Model Context Protocol. Open standard for connecting AI to external tools and data.

Multi-modal — Models that process multiple input types: text, images, audio, video.

Open Weight — Models whose weights are publicly downloadable (Llama, Mistral). Training data and code may not be shared.

Prompt Engineering — Structuring inputs to get better outputs. System prompts, few-shot examples, chain-of-thought, role-setting.

RAG — Retrieval-Augmented Generation. Connecting a model to external data so it references your documents.

RLHF — Reinforcement Learning from Human Feedback. Aligns models to human preferences.

Skill — Reusable instruction set for Claude Code. Encodes procedural knowledge. Build once, invoke by name.

System Prompt — Hidden instructions sent before the user's message. Sets behavior, constraints, format.

Temperature — Controls randomness. 0 = near-deterministic, 1 = more varied and creative. Lower for facts, higher for brainstorming.

Token — The unit AI models process. Roughly ¾ of a word. Pricing and context limits are per-token.

Transformer — Neural network architecture behind all modern LLMs. Google, 2017. Attention mechanisms process sequences in parallel.

Vector Database — Database optimized for storing and searching embeddings. Pinecone, Weaviate, ChromaDB.
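The Token and Context Window entries above can be checked with quick arithmetic. This is a back-of-envelope sketch: the ¾-word ratio is the glossary's own rule of thumb, while the 300 words per page and the price per million tokens are assumptions chosen for illustration, not quoted figures.

```python
WORDS_PER_TOKEN = 0.75  # the glossary's "roughly ¾ of a word"
WORDS_PER_PAGE = 300    # assumption: a typical page of prose


def tokens_to_pages(tokens: int) -> float:
    """Convert a token count to an approximate page count."""
    return tokens * WORDS_PER_TOKEN / WORDS_PER_PAGE


def cost_usd(tokens: int, price_per_million: float) -> float:
    """Per-token pricing: cost scales linearly with the number of tokens processed."""
    return tokens * price_per_million / 1_000_000


# A 200K-token window: 200,000 x 0.75 = 150,000 words, / 300 = 500 pages.
print(tokens_to_pages(200_000))

# Hypothetical price of $3 per million tokens, used only to show the calculation.
print(cost_usd(200_000, price_per_million=3.0))
```

Real token-to-word ratios vary by language and content, so treat the page figure as an order-of-magnitude guide.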

Megan C. Starkey is a member of the Netrii advisory network
